Google’s Penguin 3.0 update affected less than 1% of U.S./English queries in 2014. Granted, Google processes over 40,000 search queries every second, which translates to a staggering 1.2 trillion searches per year worldwide. Apply that share to the worldwide total as a rough upper bound, and Penguin 3.0 could have hit as many as 12 billion search queries.

What’s scary, though, is that Penguin 3.0 wasn’t too bad. Penguin 1.0 hit 3.1% of U.S./English queries, or roughly 37.2 billion search queries measured against that same 1.2 trillion. The quasi-cataclysmic update changed the topography of SEO, leaving digital agencies forever scarred by the memory.
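The arithmetic behind these estimates is easy to reproduce. The sketch below assumes a constant 40,000 queries per second and a 365-day year, and it applies the U.S./English percentages to the worldwide total, as the figures above do. Treat the results as rough upper bounds, not measured counts.

```python
SECONDS_PER_YEAR = 60 * 60 * 24 * 365  # assumes a non-leap year


def queries_per_year(queries_per_second: int) -> int:
    """Scale a per-second query rate up to a full year."""
    return queries_per_second * SECONDS_PER_YEAR


def affected_queries(total_queries: float, share_percent: float) -> float:
    """Number of queries touched when share_percent of them are affected."""
    return total_queries * share_percent / 100


yearly = queries_per_year(40_000)  # a little over the rounded 1.2 trillion
rounded_total = 1.2e12             # the rounded figure used in the article

penguin_3 = affected_queries(rounded_total, 1.0)  # "less than 1%", so an upper bound
penguin_1 = affected_queries(rounded_total, 3.1)

print(f"Queries per year: {yearly:,}")
print(f"Penguin 3.0 (at most): {penguin_3:,.0f}")
print(f"Penguin 1.0 (approx.): {penguin_1:,.0f}")
```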

Now, Google is supposedly going to roll out Penguin 4.0 in the near future. Everyone expected the monolithic tech company to launch the update in 2015, but the holidays delayed it to 2016. Then, everyone expected it to drop sometime in Q1 2016.

However, the SEO world still waits with bated breath.

Why is everyone so afraid of the Big Bad Penguin?

Google first launched the Penguin Update in April 2012 to catch sites spamming its search results, specifically the ones who used link schemes to manipulate search rankings. In other words, it hunted down inorganic links, the ones bought or placed solely for the sake of improving search rankings.

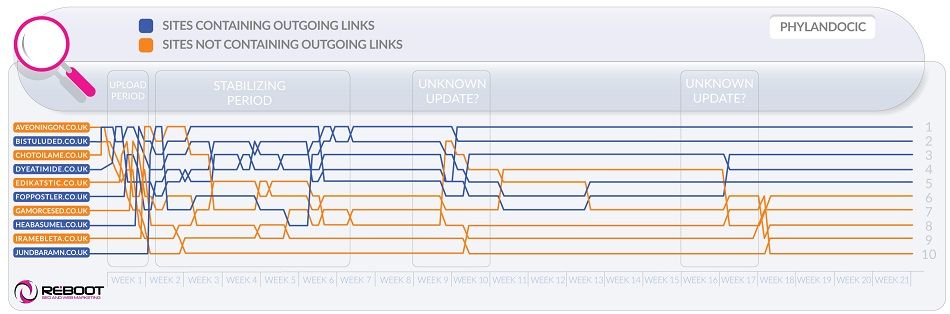

In the time it took for Penguin 2.0 and 3.0 to come out, digital agencies wised up. They heard the message loud and clear. Once a new Penguin update comes out, they know they have to take action to get rid of bad links.

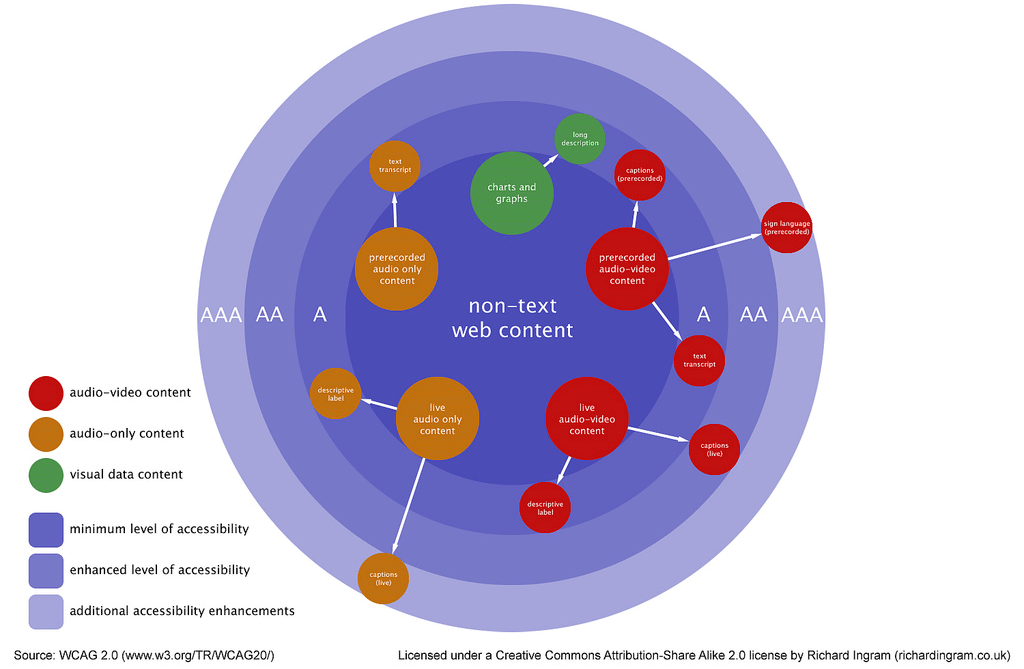

Google targets links that come from low-quality sites, have little to no relevance to the site they point to, use over-optimized or keyword-rich anchor text, or are paid for.

However, what makes Penguin truly terrifying isn’t only the impact it can have on a site’s ranking, but the damage it can do to an honest marketing campaign.

Earning backlinks is tough. That’s why some stoop to paying for them or working with shady link networks. The most tried-and-true way to earn backlinks is guest blogging, which is not only difficult but time-consuming as well.

Although Google usually ignores backlinks earned by guest blogging, that’s not to say that they’re completely Penguin-proof. In an unlikely but entirely possible scenario, your guest blogging backlinks may have become toxic.

In other words, people are so afraid of Penguin because it can ruin a lot of the hard work you’ve put into a campaign.

How can you slay the fearsome Penguin?

Luckily, there are a number of preventative measures you can take to avoid Penguin’s wrath.

The first thing you’re going to want to do is look at your backlink profile using Open Site Explorer, Majestic SEO, or Ahrefs. Look at the total number of links, the number of unique domains, the difference between the number of linking domains and total links, the anchor text usage and variance, page performance, and link quality.

If this sounds like too much work, there are paid tools, such as HubShout and Link Detox, that will automate the analysis for you and apply decision rules.
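If you export your backlinks from one of these tools, a few of those numbers can be computed with a short script. The following is a minimal sketch, not any tool's actual export format or API: it assumes each backlink is a hypothetical `(source_url, anchor_text)` pair, and the sample URLs are made up.

```python
from collections import Counter
from urllib.parse import urlparse


def summarize_backlinks(backlinks):
    """Summarize a list of (source_url, anchor_text) pairs (hypothetical format)."""
    total = len(backlinks)
    # Count links per linking domain and per normalized anchor text.
    domains = Counter(urlparse(url).netloc.lower() for url, _ in backlinks)
    anchors = Counter(anchor.strip().lower() for _, anchor in backlinks)
    top_anchor, top_count = anchors.most_common(1)[0] if anchors else ("", 0)
    return {
        "total_links": total,
        "unique_domains": len(domains),
        "links_per_domain": total / len(domains) if domains else 0.0,
        "distinct_anchors": len(anchors),
        "top_anchor": top_anchor,
        "top_anchor_share": top_count / total if total else 0.0,
    }


sample = [
    ("https://blog-a.example/post-1", "cheap widgets"),
    ("https://blog-a.example/post-2", "cheap widgets"),
    ("https://news-b.example/story", "Acme Widgets"),
    ("https://forum-c.example/thread/9", "cheap widgets"),
]
summary = summarize_backlinks(sample)
print(summary)
```

A high `top_anchor_share` or a large gap between `total_links` and `unique_domains` doesn't prove anything on its own, but both are worth a closer look.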

If you find a bunch of toxic links – the backlinks that came from link networks, unrelated domains, sites with malware warnings, spammy sites, and sites with a large number of external links – you need to take action before the Penguin strikes.
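Those warning signs can be turned into a simple review checklist. The sketch below is only an illustration: the `Backlink` fields are hypothetical flags you would have to fill in from your own research, and the 100-link threshold is an arbitrary assumption, not a number Google has published.

```python
from dataclasses import dataclass


@dataclass
class Backlink:
    # Hypothetical record; every field is filled in by your own audit.
    source_domain: str
    in_link_network: bool = False
    topically_related: bool = True
    malware_warning: bool = False
    looks_spammy: bool = False
    external_link_count: int = 0


MAX_EXTERNAL_LINKS = 100  # assumed cutoff for "a large number of external links"


def toxicity_reasons(link, max_external_links=MAX_EXTERNAL_LINKS):
    """Return the list of warning signs a backlink trips (empty if none)."""
    checks = [
        (link.in_link_network, "link network"),
        (not link.topically_related, "unrelated domain"),
        (link.malware_warning, "malware warning"),
        (link.looks_spammy, "spammy site"),
        (link.external_link_count > max_external_links, "too many external links"),
    ]
    return [reason for failed, reason in checks if failed]


links = [
    Backlink("trusted-blog.example", external_link_count=12),
    Backlink("link-farm.example", in_link_network=True, external_link_count=450),
    Backlink("random-casino.example", topically_related=False),
]

# Keep only the links that trip at least one check.
review_queue = {
    link.source_domain: toxicity_reasons(link)
    for link in links
    if toxicity_reasons(link)
}
print(review_queue)
```

The output is a queue for human review, not a verdict. Whether a flagged link is actually hurting you is still a judgment call.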

Your next step is to remove the links manually. Contact each site’s owner and ask them to remove the links. Failing that, you can always disavow them, which tells Google not to count those links when it determines PageRank and search engine ranking.
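Google’s disavow tool takes a plain text file with one entry per line: either a full URL or `domain:` followed by a domain name, with lines starting with `#` treated as comments. Here is a minimal sketch of generating such a file. The function name, the sample domains, and the output filename are all made up for illustration.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Build the text of a disavow file from domains and individual URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")  # comment line, e.g. a record of outreach attempts
    # Whole-domain entries: deduplicated, lowercased, and sorted for stable output.
    for domain in sorted({d.strip().lower() for d in domains if d.strip()}):
        lines.append(f"domain:{domain}")
    # Single-URL entries for cases where only one page should be disavowed.
    for url in sorted({u.strip() for u in urls if u.strip()}):
        lines.append(url)
    return "\n".join(lines) + "\n"


disavow_text = build_disavow_file(
    domains=["Link-Farm.example", "link-farm.example "],
    urls=["https://random-casino.example/page.html"],
    note="Owners contacted, no reply",
)
print(disavow_text)

# To save it for upload (filename is an assumption):
# with open("disavow.txt", "w", encoding="utf-8") as handle:
#     handle.write(disavow_text)
```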

How can you recover after a Penguin attack?

If Penguin 4.0 does wind up pecking your campaign to the verge of death, don’t worry. You can recover.

Analyzing your backlink profile and removing toxic links – what you should do to prevent a Penguin issue – are also the steps you need to take to recover.

However, the thing about disavowing a link is that it may actually hurt your campaign. No one but the Google hivemind really knows whether a given link helps or hurts. You can only make an educated guess. Despite this risk, you still need to disavow any links that appear to be toxic.

The next logical step after purging your backlink profile is to build it up again. Although you should never stop trying to earn backlinks, it’s a smart idea to redouble your efforts after a Penguin attack.

Guest blogging isn’t the only way to earn backlinks, either. Entrepreneur offers a great list of creative ways to get people to link to your site, such as:

- Broken-Link Building: Check a site for broken links, and compile them into a list. Then, take that list to the webmaster, and suggest other websites to replace the links, one of which is yours.

- Infographics: The thing about infographics is that they’re more shareable than blogs. Research shows that 40% of people respond better to visual information than plain text. The idea here is exactly the same as the idea of content marketing. You create a great piece of content – an infographic, in this case – and people are going to share it. In the case of an infographic, other sites and blogs could repost it. Success isn’t guaranteed, but this method can work.

- Roundups: Similar to guest blogging, reaching out to bloggers and sites that run weekly or monthly roundups is a great way to get some backlinks. Search your keyword and “roundup,” and limit the results to the past week or month. Once you’ve found a few, send the webmaster a link to one of your guides, tutorials, or other pieces of content (like, say, a new infographic). Sites that run roundups are constantly looking for content, so there’s a good chance they’ll include your work in their next edition.
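The first step of broken-link building from the list above, finding the dead links on a page, can be partly automated. This is a rough sketch using only Python's standard library. It assumes you already have the page's HTML, and `get_status` is a hypothetical callable you supply that returns an HTTP status code for a URL. The example uses a canned lookup table instead of real requests.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, value))


def find_broken_links(html, base_url, get_status):
    """Return links whose status code, per get_status, is 400 or higher."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    broken = []
    for link in parser.links:
        if not link.startswith(("http://", "https://")):
            continue  # skip mailto:, tel:, and similar schemes
        if get_status(link) >= 400:
            broken.append(link)
    return broken


page = (
    '<a href="/old-guide">Old guide</a>'
    '<a href="https://ok.example/">Working link</a>'
    '<a href="mailto:editor@site.example">Email</a>'
)
# Canned statuses standing in for real HTTP checks.
statuses = {"https://site.example/old-guide": 404, "https://ok.example/": 200}
broken = find_broken_links(
    page, "https://site.example/resources", lambda url: statuses.get(url, 200)
)
print(broken)
```

In practice, `get_status` would make a real HTTP request for each link, ideally with a delay between requests so you don't hammer the site you're hoping to get a link from.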

What’s next?

So long as you take these precautionary steps, you’ll be in good shape whenever Penguin does rear its beaked head.

The article “The Penguin in the room: what to do until Google rolls out its latest update” first appeared on https://searchenginewatch.com